Understanding gRPC Concepts, Use Cases & Best Practices

As application development progresses, one thing we worry about less and less is computing power. With the advent of cloud providers, we no longer have to manage data centers; everything is available on demand within seconds. This has led to an increase in the size of data as well: large volumes of data are generated and transported over various mediums, often in single requests.

With the increase in the size of data come the costs of serializing, deserializing, and transporting it. Though we are not worried about computing resources, latency becomes an overhead, and we need to cut down on transportation. Many messaging protocols have been developed in the past to address this. SOAP was bulky, and REST is a trimmed-down version, but we need an even more efficient framework. That’s where Remote Procedure Calls (RPC) come in.

In this blog post, we will understand what RPC is and the various implementations of RPC with a focus on gRPC, which is Google’s implementation of RPC. We’ll also compare REST with RPC and understand various aspects of gRPC, including security, tooling, and much more. So, let’s get started!

What is RPC?

RPC stands for ‘Remote Procedure Calls’. The definition is in the name itself. Procedure calls simply mean function/method calls; it’s the ‘Remote’ word that makes all the difference. What if we can make a function call remotely?

Simply put, if a function resides on a ‘server’ and needs to be invoked from the ‘client’ side, could we make that as simple as a method/function call? Essentially, an RPC gives the client the ‘illusion’ that it is invoking a local method, while in reality it invokes a method on a remote machine, with the network layer tasks abstracted away. The beauty of this is that the contract is kept very strict and transparent (we will discuss this later in the article).

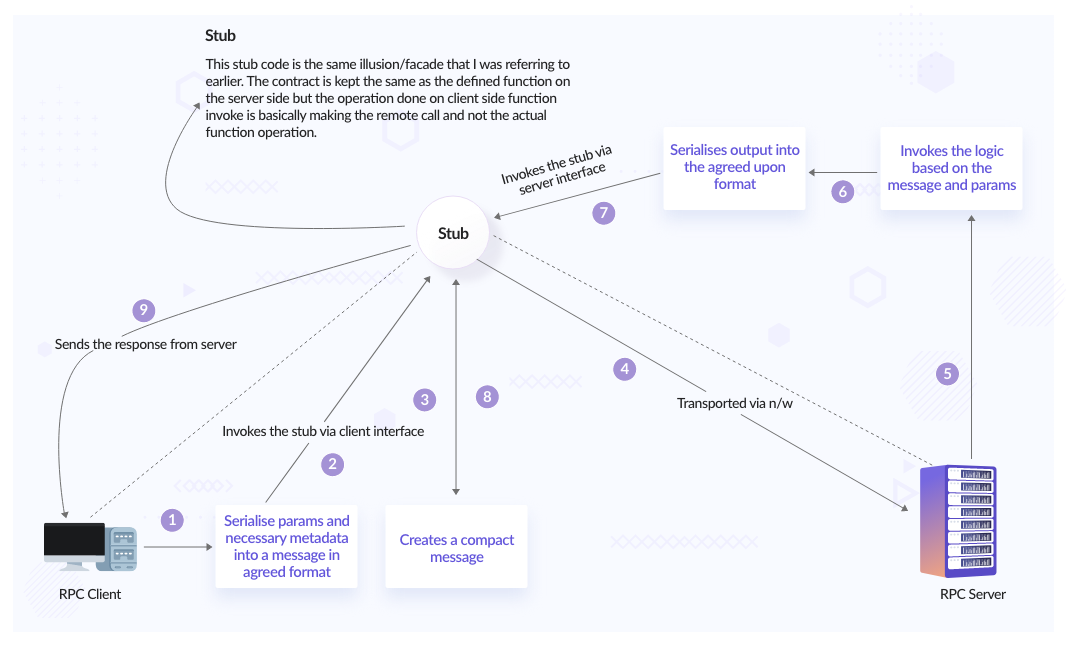

Steps involved in an RPC call:

RPC Sequence Flow

This is what a typical REST process looks like:

RPC boils the process down to the following:

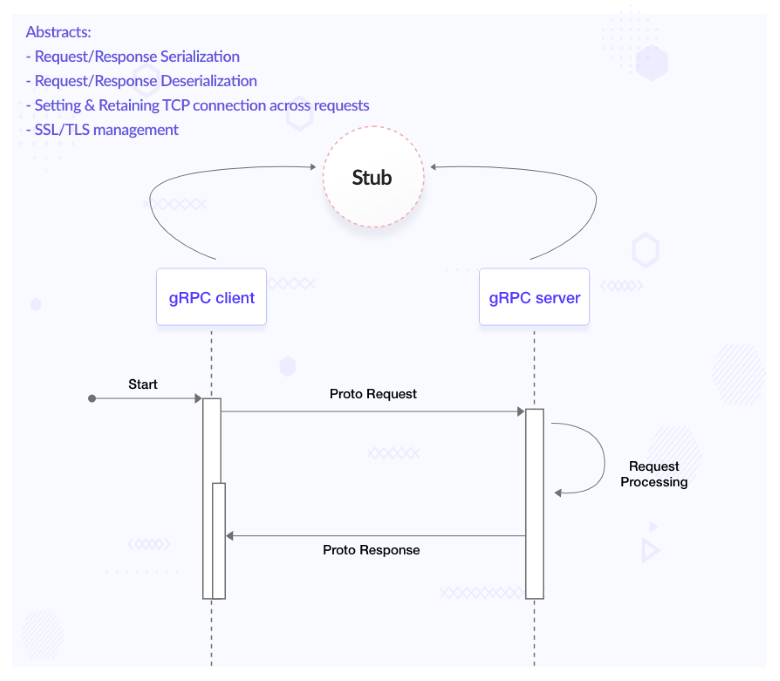

This is because all the complications associated with making a request are now abstracted from us (we will discuss this in code-generation). All we need to worry about is the data and logic.
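To make the ‘illusion’ of a local call concrete, here is a minimal sketch that uses Go's standard library package net/rpc rather than gRPC. The Arith service, its Multiply method, and the in-memory net.Pipe connection are assumptions chosen for illustration; a real deployment would listen on a network address.

```go
package main

import (
	"fmt"
	"net"
	"net/rpc"
)

// Args is the request payload for the hypothetical Multiply procedure.
type Args struct{ A, B int }

// Arith is a hypothetical service whose exported methods are callable remotely.
type Arith struct{}

// Multiply follows the net/rpc method shape: (args, *reply) error.
func (Arith) Multiply(args Args, reply *int) error {
	*reply = args.A * args.B
	return nil
}

// remoteMultiply serves Arith over an in-memory connection and calls
// Multiply through the RPC client, as if it were a local function.
func remoteMultiply(a, b int) (int, error) {
	srv := rpc.NewServer()
	if err := srv.Register(Arith{}); err != nil {
		return 0, err
	}
	clientConn, serverConn := net.Pipe()
	go srv.ServeConn(serverConn)

	client := rpc.NewClient(clientConn)
	defer client.Close()

	var product int
	err := client.Call("Arith.Multiply", Args{A: a, B: b}, &product)
	return product, err
}

func main() {
	product, err := remoteMultiply(6, 7)
	if err != nil {
		panic(err)
	}
	fmt.Println(product) // prints 42
}
```

The caller of remoteMultiply only deals with data and a result; encoding, transport, and dispatch happen inside the RPC library.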

gRPC - what, why, and how of it

So far, we discussed RPC, which essentially means making function/method calls remotely, thereby giving us benefits like ‘strict contract definition’, ‘abstracting transmission and conversion of data’, ‘reducing latency’, etc., which we will discuss as we proceed with this post. What we would really like to dive deep into is one of the implementations of RPC. RPC is a concept, and gRPC is a framework based on it.

There are various implementations of RPCs. They are:

- gRPC (Google)

- Thrift (Facebook)

- Finagle (Twitter)

Google’s version of RPC is referred to as gRPC. It was introduced in 2015 and has been gaining traction since. It is one of the most frequently chosen communication mechanisms in a microservice architecture.

gRPC uses protocol buffers (an open source message format) as the default message format between client and server, and HTTP/2 as the default transport protocol.

gRPC supports four types of communication:

- Unary (typical client and server communication)

- Client side streaming

- Server side streaming

- Bidirectional streaming
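The four communication types can be pictured as four function shapes. The sketch below is only an in-process analogy that uses Go channels in place of network streams; all function names are hypothetical, and real gRPC streams run over HTTP/2 through generated stubs.

```go
package main

import "fmt"

// unary: one request in, one response out.
func unary(name string) string { return "hello " + name }

// serverStream: one request in, a stream of responses out.
func serverStream(name string, n int) <-chan string {
	out := make(chan string)
	go func() {
		defer close(out)
		for i := 1; i <= n; i++ {
			out <- fmt.Sprintf("hello %s #%d", name, i)
		}
	}()
	return out
}

// clientStream: a stream of requests in, one response out.
func clientStream(names <-chan string) string {
	count := 0
	for range names {
		count++
	}
	return fmt.Sprintf("greeted %d clients", count)
}

// bidiStream: every request is answered as it arrives.
func bidiStream(names <-chan string) <-chan string {
	out := make(chan string)
	go func() {
		defer close(out)
		for name := range names {
			out <- unary(name)
		}
	}()
	return out
}

// feed turns a fixed list of names into a request stream.
func feed(names ...string) <-chan string {
	in := make(chan string)
	go func() {
		defer close(in)
		for _, n := range names {
			in <- n
		}
	}()
	return in
}

func main() {
	fmt.Println(unary("gopher"))
	for msg := range serverStream("gopher", 2) {
		fmt.Println(msg)
	}
	fmt.Println(clientStream(feed("a", "b", "c")))
	for msg := range bidiStream(feed("x", "y")) {
		fmt.Println(msg)
	}
}
```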

Now let's look at the message format most widely used with gRPC: protocol buffers, a.k.a. protobufs. A protobuf message looks something like this:

    message Person {
      string name = 1;
      string id = 2;
      string email = 3;
    }

Here, Person is the message we would like to transfer (as a part of request/response), which has fields name (string type), id (string type) and email (string type). The numbers 1, 2, 3 are field numbers: they identify each field (name, id, and email) in the serialized binary format, so they must be unique within the message.
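To see where the field numbers end up, the following sketch hand-encodes the three string fields of Person according to the protobuf wire format: each field starts with a varint key equal to (field number << 3) | wire type, and strings use wire type 2 (length-delimited). This is an illustration written by hand, not the code protoc generates, and the sample values are made up.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// appendString hand-encodes one string field: a varint key built from the
// field number and wire type 2, a varint length, then the raw bytes.
func appendString(buf []byte, fieldNumber uint64, value string) []byte {
	const wireTypeLengthDelimited = 2
	buf = binary.AppendUvarint(buf, fieldNumber<<3|wireTypeLengthDelimited)
	buf = binary.AppendUvarint(buf, uint64(len(value)))
	return append(buf, value...)
}

// encodePerson writes name, id, and email with field numbers 1, 2, and 3,
// matching the Person message above.
func encodePerson(name, id, email string) []byte {
	var buf []byte
	buf = appendString(buf, 1, name)
	buf = appendString(buf, 2, id)
	buf = appendString(buf, 3, email)
	return buf
}

func main() {
	// Keys 0a, 12, and 1a carry field numbers 1, 2, and 3.
	fmt.Printf("% x\n", encodePerson("Ann", "7", "a@b.c"))
	// prints: 0a 03 41 6e 6e 12 01 37 1a 05 61 40 62 2e 63
}
```

Note that the field names never appear in the output; only the numbers do, which is one reason the binary form is compact.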

Once the developer has created the Protocol Buffer file(s) with all messages, we can use the ‘protocol buffer compiler’ (a binary) to compile them, which generates all the utility classes and methods needed to work with the messages. For the Person message above, the compiler typically produces a type with fields and accessor methods for name, id, and email; the exact shape of the generated code depends on the chosen language.
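For Go, a heavily simplified, hand-written approximation of the generated type might look like the sketch below. Real output from protoc-gen-go contains additional internal fields and reflection support that are omitted here; the part worth noticing is the nil-safe getter pattern.

```go
package main

import "fmt"

// Person approximates the struct generated for the Person message.
type Person struct {
	Name  string
	Id    string
	Email string
}

// GetName returns the name, or the zero value when the receiver is nil.
func (p *Person) GetName() string {
	if p != nil {
		return p.Name
	}
	return ""
}

// GetId returns the id, or the zero value when the receiver is nil.
func (p *Person) GetId() string {
	if p != nil {
		return p.Id
	}
	return ""
}

// GetEmail returns the email, or the zero value when the receiver is nil.
func (p *Person) GetEmail() string {
	if p != nil {
		return p.Email
	}
	return ""
}

func main() {
	p := &Person{Name: "Ann", Id: "7", Email: "ann@example.com"}
	fmt.Println(p.GetName(), p.GetId(), p.GetEmail())

	// Getters are safe to call on a nil message.
	var missing *Person
	fmt.Println(missing.GetName() == "")
}
```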

How do we define services?

We need to define services that use the above messages to be sent/received.

After writing the necessary request and response message types, the next step is to write the service itself. gRPC services are also defined in Protocol Buffers and they use the ‘service’ and ‘rpc’ keywords to define a service.

Take a look at the content of the below proto file:

    message HelloRequest {
      string name = 1;
      string description = 2;
      int32 id = 3;
    }

    message HelloResponse {
      string processedMessage = 1;
    }

    service HelloService {
      rpc SayHello (HelloRequest) returns (HelloResponse);
    }

Here, HelloRequest and HelloResponse are the messages and HelloService is exposing one unary RPC called SayHello which takes HelloRequest as input and gives HelloResponse as output.

As mentioned, HelloService currently contains a single unary RPC, but it could contain more than one RPC, and they can be of any type (unary, client-side streaming, server-side streaming, or bidirectional streaming).

To define a streaming RPC, all you have to do is prefix the request and/or response argument with ‘stream’. See the streaming RPCs proto definitions and generated code.

In the above code-base link:

- streaming.proto: this file is user defined

- streaming.pb.go & streaming_grpc.pb.go: these files are auto-generated by running the proto compiler command.

gRPC vs REST

We have talked about gRPC a fair bit, and REST was mentioned too, but we have not yet discussed the difference. When we have a well-established, lightweight communication framework in the form of REST, why was there a need to look for another one? Let us understand more about gRPC with respect to REST, along with the pros and cons of each.

To compare them we need parameters, so let’s break down the comparison into the following:

- Message format: protocol buffers vs JSON

  - Serialization and deserialization are considerably faster with protocol buffers across all data sizes (small/medium/large); see the benchmark test results.

  - Once serialized, JSON is human readable while protobufs (in binary format) are not. Whether this is a disadvantage depends on the situation: sometimes you would like to inspect request details in the browser developer tools or in Kafka topics, and with protobufs you can’t make out anything.

-

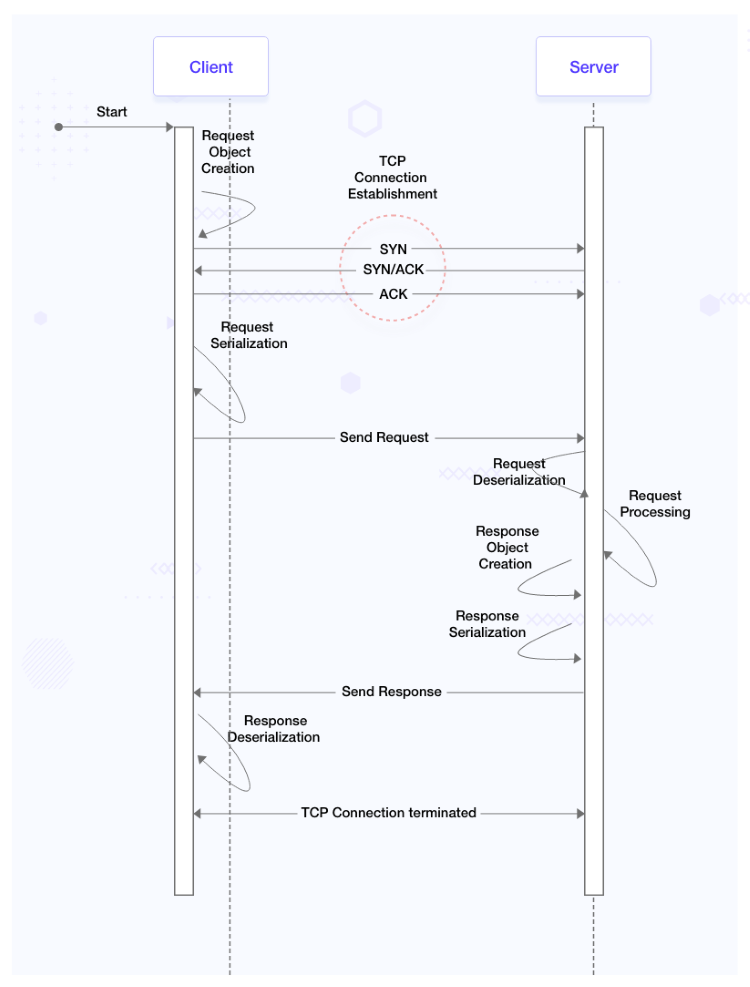

Communication protocol: HTTP 1.1 vs HTTP/2

- REST is based on HTTP 1.1; communication between a REST client and server would require an established TCP connection which in turn has a 3-way handshake involved. When we get a response from the server upon sending a request from the client, the TCP connection does not exist after that. A new TCP connection needs to be spun up in order to process another request. This establishment of a TCP connection on each and every request adds up to the latency.

- So gRPC which is based on HTTP 2 has encountered this challenge by having a persistent connection. We must remember that persistent connections in HTTP 2 are different from that in web sockets where a TCP connection is hijacked and the data transfer is unmonitored. In a gRPC connection, once a TCP connection is established, it is reused for several requests. All requests from the same client and server pair are multiplexed onto the same TCP connection.

- Just worrying about data and logic: code generation being a first-class citizen

  - Code generation is native to gRPC via the protoc compiler and its plugins. With REST APIs, it’s necessary to use a third-party tool such as Swagger to auto-generate the code for API calls in various languages.

  - gRPC abstracts the process of marshaling/unmarshalling, setting up a connection, and sending/receiving messages; all we need to worry about is the data that we want to send or receive and the logic.

- Transmission speed

  - Since the binary format is much lighter than the JSON format, transmission is faster with gRPC than with REST. A figure of 7 to 10 times is often quoted, though the actual gain depends on the payload and the benchmark.

| Feature | REST | gRPC |
|---|---|---|
| Communication Protocol | Follows request-response model. It can work with either HTTP version but is typically used with HTTP 1.1 | Follows client-response model and is based on HTTP 2. Some servers have workarounds to make it work with HTTP 1.1 (via rest gateways) |
| Browser support | Works everywhere | Limited support. Need to use gRPC-Web, which is an extension for the web and is based on HTTP 1.1 |
| Payload data structure | Mostly uses JSON and XML-based payloads to transmit data | Uses protocol buffers by default to transmit payloads |
| Code generation | Need to use third-party tools like Swagger to generate client code | gRPC has native support for code generation for various languages |
| Request caching | Easy to cache requests on the client and server sides. Most clients/servers natively support it (for example via cookies) | Does not support request/response caching by default |

For the time being, gRPC has only limited browser support, since most UI frameworks still have limited or no support for it. Although gRPC is an automatic choice in most cases for internal microservices communication, the same is not true for external communication that requires UI integration.

Now that we have compared the two frameworks, gRPC and REST, which one should we use, and when?

- In a microservice architecture with multiple lightweight microservices, where the efficiency of data transmission is paramount, gRPC would be an ideal choice.

- If code generation with multiple language support is a requirement, gRPC should be the go-to framework.

- With gRPC’s streaming capabilities, real-time apps like trading or OTT would benefit from it rather than polling using REST.

- If bandwidth is a constraint, gRPC would provide much lower latency and higher throughput.

- If quicker development and high-speed iteration is a requirement, REST should be a go-to option.

gRPC Concepts

Load balancing

Even though the persistent connection solves the latency issue, it throws up another challenge in the form of load balancing. Since gRPC (or HTTP/2) creates persistent connections, even in the presence of a load balancer the client forms a persistent connection with one server behind the load balancer. This is analogous to a sticky session.

We can understand the challenge via a demo; the code and deployment files for it are present in this repository.

From the above demo code base, we can see that the onus of load balancing falls on the client. As a consequence, the advantage of a single persistent connection is partly lost, because the client now has to maintain connections to several backends. But gRPC can still be used for its other benefits.

Read more about load balancing in gRPC.

In the above demo code-base, only a ‘round-robin’ load balancing strategy is showcased. But gRPC supports another client-side load balancing strategy out of the box, called ‘pick-first’.

Furthermore, custom client-side load balancing is also supported.
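The difference between the two strategies can be sketched in a few lines of plain Go. This is a simplified model, not gRPC's balancer API: the picker interface and backend names are hypothetical, and pick-first is assumed to always find its first address reachable.

```go
package main

import "fmt"

// picker chooses a backend for each outgoing call.
type picker interface{ pick() string }

// pickFirst sends every call to the first backend in the list.
type pickFirst struct{ backends []string }

func (p *pickFirst) pick() string { return p.backends[0] }

// roundRobin cycles through the backends in order.
type roundRobin struct {
	backends []string
	next     int
}

func (r *roundRobin) pick() string {
	b := r.backends[r.next%len(r.backends)]
	r.next++
	return b
}

// distribute issues the given number of calls and counts calls per backend.
func distribute(p picker, calls int) map[string]int {
	counts := map[string]int{}
	for i := 0; i < calls; i++ {
		counts[p.pick()]++
	}
	return counts
}

func main() {
	backends := []string{"pod-a", "pod-b", "pod-c"}
	fmt.Println(distribute(&pickFirst{backends: backends}, 6))  // map[pod-a:6]
	fmt.Println(distribute(&roundRobin{backends: backends}, 6)) // map[pod-a:2 pod-b:2 pod-c:2]
}
```

With pick-first, a single backend absorbs all traffic from a client, which is the sticky behaviour described above; round-robin spreads the calls evenly at the cost of keeping a connection open to every backend.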

Clean contract

In REST, the contract between the client and server is documented but not strict. If we go back even further to SOAP, contracts were exposed via wsdl files. In REST we expose contracts via Swagger and other provisions. But the strictness is lacking: we cannot know for sure whether the contract has changed on the server’s side while the client code is being developed.

With gRPC, the contract is shared with both the client and server, either directly via proto files or via stubs generated from the proto files. This is like making a function call, but remotely. And since we are making a function call, we know exactly what we need to send and what to expect as a response. The complexity of making connections, taking care of security, serialization-deserialization, etc. is abstracted. All we care about is the data.

Let’s consider the code base for Greet App.

The client uses the stub (generated code from proto file) to create a client object and invoke remote function call:

    import greetpb "github.com/infracloudio/grpc-blog/greet_app/internal/pkg/proto"

    // Open a connection to the server.
    cc, err := grpc.Dial("<server-address>", opts)
    if err != nil {
        log.Fatalf("could not connect: %v", err)
    }

    // Create a client from the generated stub and invoke the remote function.
    c := greetpb.NewGreetServiceClient(cc)
    res, err := c.Greet(context.Background(), req)
    if err != nil {
        log.Fatalf("error while calling greet rpc : %v", err)
    }

Similarly, the server too uses the same stub (generated code from proto file) to receive request object and create response object:

    import greetpb "github.com/infracloudio/grpc-blog/greet_app/internal/pkg/proto"

    func (*server) Greet(_ context.Context, req *greetpb.GreetingRequest) (*greetpb.GreetingResponse, error) {
        // do something with 'req'
        return &greetpb.GreetingResponse{
            Result: result,
        }, nil
    }

Both of them use the same stub generated from the proto file greet.proto.

The stub was generated with the protoc compiler, using this command:

    protoc --go_out=. --go_opt=paths=source_relative --go-grpc_out=. --go-grpc_opt=paths=source_relative internal/pkg/proto/*.proto

Security

gRPC authentication and authorization work on two levels:

- Call-level authentication/authorization is usually handled through tokens that are applied in metadata when the call is made. Token based authentication example.

- Channel-level authentication uses a client certificate that’s applied at the connection level. It can also include call-level authentication/authorization credentials to be applied to every call on the channel automatically. Certificate based authentication example.

Either or both of these mechanisms can be used to help secure services.
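As a rough sketch of the call-level check, the code below models call metadata as a map from lower-case keys to lists of values (the same shape gRPC metadata has) and validates a bearer token. The metadata type, the authorize function, and the "authorization" key with a "Bearer " prefix are assumptions for illustration; in a real service this logic would typically sit in a server interceptor and the token would be verified against an identity provider rather than a fixed string.

```go
package main

import (
	"crypto/subtle"
	"errors"
	"fmt"
	"strings"
)

// metadata models per-call metadata: lower-case keys mapped to values.
type metadata map[string][]string

var errUnauthenticated = errors.New("unauthenticated")

// authorize accepts the call only if the metadata carries the expected
// bearer token under the "authorization" key.
func authorize(md metadata, validToken string) error {
	const prefix = "Bearer "
	values := md["authorization"]
	if len(values) == 0 || !strings.HasPrefix(values[0], prefix) {
		return errUnauthenticated
	}
	token := strings.TrimPrefix(values[0], prefix)
	// Compare in constant time to avoid leaking the token through timing.
	if subtle.ConstantTimeCompare([]byte(token), []byte(validToken)) != 1 {
		return errUnauthenticated
	}
	return nil
}

func main() {
	good := metadata{"authorization": {"Bearer s3cr3t"}}
	bad := metadata{"authorization": {"Bearer wrong"}}
	fmt.Println(authorize(good, "s3cr3t")) // <nil>
	fmt.Println(authorize(bad, "s3cr3t"))  // unauthenticated
}
```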

Middlewares

In REST, we use middlewares for various purposes like:

- Rate limiting

- Pre/Post request/response validation

- Address security threats

We can achieve the same with gRPC as well. The terminology is different: in gRPC they are referred to as ‘interceptors’, but they perform similar activities.

In the middlewares branch of the greet_app code base, we have integrated logger and Prometheus interceptors.

Look at how the interceptors are configured to use Prometheus and logging packages in middleware.go.

    // add middleware
    AddLogging(&zap.Logger{}, &uInterceptors, &sInterceptors)
    AddPrometheus(&uInterceptors, &sInterceptors)

But we can integrate other packages to interceptors for purposes like preventing panic and recovery (to handle exceptions), tracing, even authentication, etc.
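The interceptor pattern itself is small enough to sketch without any gRPC dependency. In the sketch below, the handler and interceptor types are simplified stand-ins for gRPC's unary handler and unary server interceptor, and chain, logging, recovery, and invoke are hypothetical helpers written for this example.

```go
package main

import (
	"context"
	"fmt"
)

// handler processes one unary request.
type handler func(ctx context.Context, req any) (any, error)

// interceptor wraps a handler and decides when to call the next one.
type interceptor func(ctx context.Context, req any, next handler) (any, error)

// chain wraps h so that the first interceptor runs outermost.
func chain(h handler, interceptors ...interceptor) handler {
	for i := len(interceptors) - 1; i >= 0; i-- {
		ic, next := interceptors[i], h
		h = func(ctx context.Context, req any) (any, error) {
			return ic(ctx, req, next)
		}
	}
	return h
}

// logging records the request and the outcome of every call.
func logging(calls *[]string) interceptor {
	return func(ctx context.Context, req any, next handler) (any, error) {
		*calls = append(*calls, fmt.Sprintf("request: %v", req))
		resp, err := next(ctx, req)
		*calls = append(*calls, fmt.Sprintf("response: %v, error: %v", resp, err))
		return resp, err
	}
}

// recovery converts a panic in the wrapped handler into an error.
func recovery(ctx context.Context, req any, next handler) (resp any, err error) {
	defer func() {
		if r := recover(); r != nil {
			err = fmt.Errorf("recovered from panic: %v", r)
		}
	}()
	return next(ctx, req)
}

// invoke runs a hypothetical greet handler behind logging and recovery.
func invoke(name string, shouldPanic bool) (any, []string, error) {
	var calls []string
	greet := func(ctx context.Context, req any) (any, error) {
		if shouldPanic {
			panic("handler failed")
		}
		return fmt.Sprintf("hello %v", req), nil
	}
	h := chain(greet, logging(&calls), recovery)
	resp, err := h(context.Background(), name)
	return resp, calls, err
}

func main() {
	resp, calls, err := invoke("gopher", false)
	fmt.Println(resp, err)
	fmt.Println(calls)
}
```

Ordering matters: because logging is placed before recovery, it also sees the error produced when recovery catches a panic.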

See the middlewares supported by the gRPC framework.

Packaging, versioning and code practices of proto files

Packaging

Let’s follow the packaging branch.

Start with Taskfile.yaml: the task gen-pkg runs protoc --proto_path=packaging packaging/*.proto --go_out=packaging. This means protoc (the compiler) will convert all files matching packaging/*.proto into their equivalent Go files and, as denoted by the flag --go_out=packaging, write them into the packaging directory itself.

Secondly, the ‘processor.proto’ file defines two messages, ‘CPU’ and ‘GPU’. While CPU is a simple message with 3 fields of built-in data types, the GPU message has the same built-in fields plus an additional field of a custom data type called ‘Memory’. ‘Memory’ is a separate message, defined in a different file altogether. So how do you use the ‘Memory’ message in the ‘processor.proto’ file? By using import.

processor.proto:

    syntax = "proto3";

    package laptop_pkg;

    option go_package = "/pb";

    import "memory.proto";

    message CPU {
      string brand = 1;
      string name = 2;
      uint32 cores = 3;
    }

    message GPU {
      string brand = 1;
      string name = 2;
      uint32 cores = 3;
      Memory memory = 4;
    }

memory.proto:

    syntax = "proto3";

    package laptop_pkg;

    option go_package = "/pb";

    message Memory {
      enum Unit {
        UNKNOWN = 0;
        BIT = 1;
        BYTE = 2;
        KILOBYTE = 3;
        MEGABYTE = 4;
        GIGABYTE = 5;
      }

      uint64 value = 1;
      Unit unit = 2;
    }

If the two files do not declare the same package, running task gen-pkg throws an error even with the import in place, because protoc then treats memory.proto and processor.proto as belonging to different packages. So you need to declare the same package name in both files. The optional go_package option tells the compiler to use pb as the package name for the generated Go files. For code generated in any other language, the package name would be laptop_pkg.

Versioning

There can be two kinds of changes in gRPC: breaking and non-breaking changes.

- Non-breaking changes include adding a new service, adding a new method to a service, adding a field to request or response proto, and adding a value to enum

- Breaking changes like renaming a field, changing field data type, field number, renaming or removing a package, service or methods require versioning of services

- In order to distinguish between same name messages or services across proto files, optional packaging can be implemented.
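One way to see why adding a field is non-breaking is to look at how a reader can treat field numbers it does not know. The sketch below hand-decodes length-delimited string fields and skips any number outside a known set. It is an illustration of the wire-format idea only: appendString and readStrings are hypothetical helpers, the extra field 4 is invented, and only wire type 2 is handled.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// appendString encodes one length-delimited (wire type 2) string field.
func appendString(buf []byte, field uint64, value string) []byte {
	buf = binary.AppendUvarint(buf, field<<3|2)
	buf = binary.AppendUvarint(buf, uint64(len(value)))
	return append(buf, value...)
}

// readStrings returns the string fields whose numbers are in known and
// silently skips every other field number.
func readStrings(buf []byte, known map[uint64]bool) (map[uint64]string, error) {
	out := map[uint64]string{}
	for len(buf) > 0 {
		key, n := binary.Uvarint(buf)
		if n <= 0 {
			return nil, errors.New("bad field key")
		}
		buf = buf[n:]
		if key&7 != 2 {
			return nil, errors.New("sketch handles only length-delimited fields")
		}
		size, n := binary.Uvarint(buf)
		if n <= 0 || uint64(len(buf)-n) < size {
			return nil, errors.New("bad field length")
		}
		value := buf[n : n+int(size)]
		buf = buf[n+int(size):]
		if field := key >> 3; known[field] {
			out[field] = string(value)
		}
	}
	return out, nil
}

func main() {
	// A newer writer adds a hypothetical field 4 to the message.
	var msg []byte
	msg = appendString(msg, 1, "Ann")
	msg = appendString(msg, 4, "555-0100")

	// An older reader only knows fields 1 to 3 and still reads the name.
	old := map[uint64]bool{1: true, 2: true, 3: true}
	fields, err := readStrings(msg, old)
	fmt.Println(fields, err) // map[1:Ann] <nil>
}
```

The same sketch hints at why changing a field number is breaking: the old reader would look for the value under the old number and no longer find it.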

Code practices

- Request message names must end with the suffix Request, for example CreateUserRequest.

- Response message names must end with the suffix Response, for example CreateUserResponse.

- In case the response message is empty, you can either use an empty CreateUserResponse object or use google.protobuf.Empty.

- The package name must make sense and must be versioned, for example: package com.ic.internal_api.service1.v1.

Tooling

The gRPC ecosystem offers an array of tools to make life easier in non-development tasks like documentation, a REST gateway for a gRPC server, integrating custom validators, linting, etc. Here are some tools that can help us achieve this:

- protoc-gen-grpc-gateway — plugin for creating a gRPC REST API gateway. It allows gRPC endpoints as REST API endpoints and performs the translation from JSON to proto. Basically, you define a gRPC service with some custom annotations and it makes those gRPC methods accessible via REST using JSON requests.

- protoc-gen-swagger — a companion plugin for grpc-gateway. It is able to generate swagger.json based on the custom annotations required for gRPC gateway. You can then import that file into your REST client of choice (such as Postman) and perform REST API calls to the methods you exposed.

- protoc-gen-grpc-web — a plugin that allows our front end to communicate with the backend using gRPC calls. A separate blog post on this coming up in the future.

- protoc-gen-go-validators - a plugin that allows you to define validation rules for proto message fields. It generates a Validate() error method for proto messages that you can call in Go to check whether the message matches your predefined expectations.

- protolint - a plugin to add lint rules to proto files.

Testing using Postman

Compared with testing REST APIs using Postman or equivalent tools like Insomnia, testing gRPC services is not quite as comfortable.

Note: gRPC services can also be tested from the CLI using tools like evans-cli. For that, reflection needs to be enabled in the gRPC server (if it is not enabled, the path to the proto file is required). This compare link shows how to enable reflection and how to enter evans-cli repl mode. Once in repl mode, gRPC services can be tested from the CLI itself; the process is described on the evans-cli GitHub page.

Postman has a beta version of testing gRPC services.

Here are the steps of how you can do it:

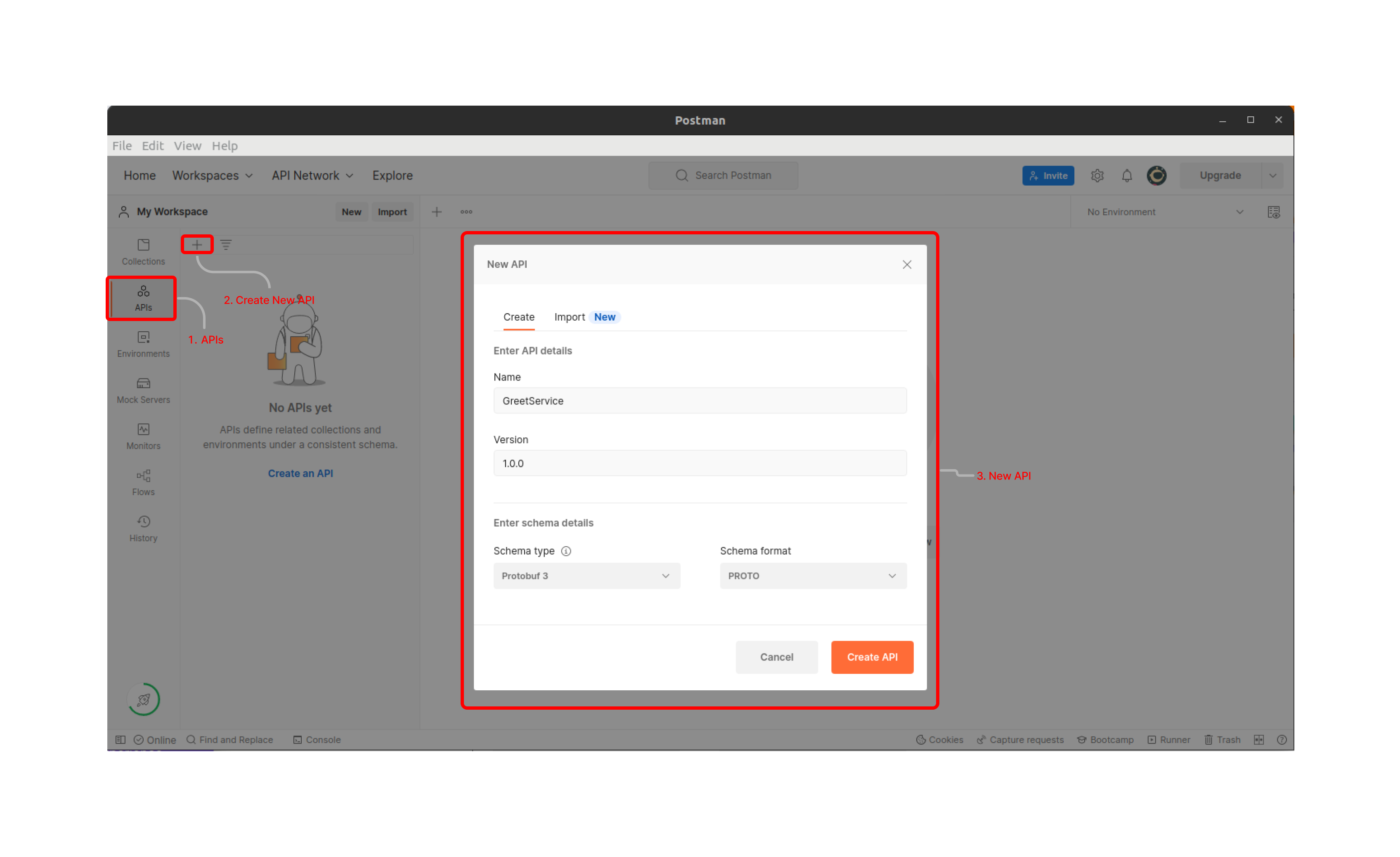

-

Open Postman, goto ‘APIs’ in the left sidebar and click on ‘+’ sign to create new api. In the popup window, enter ‘Name’, ‘Version’, and ‘Schema Details’ and click on create [unless you need to import from some sources like GitHub, Bitbucket].

-

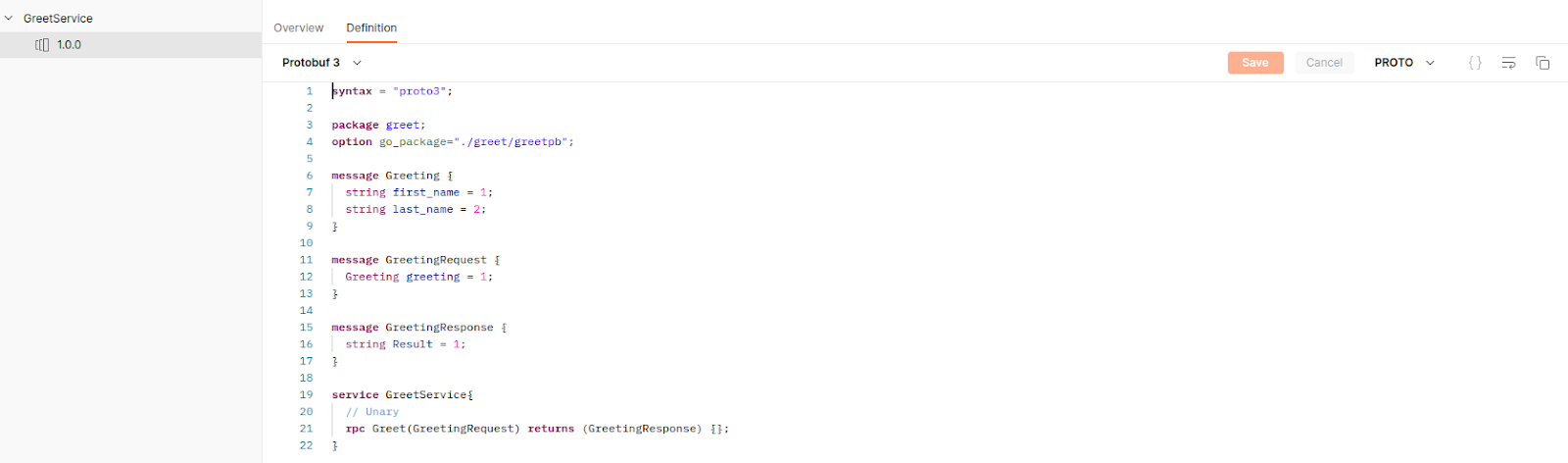

Once Your API gets created then go to definition and enter your proto contract.

-

Remember importing does not work here, so it would be better to keep all dependent protos at one place.

-

The above steps will help to retain contracts for future use.

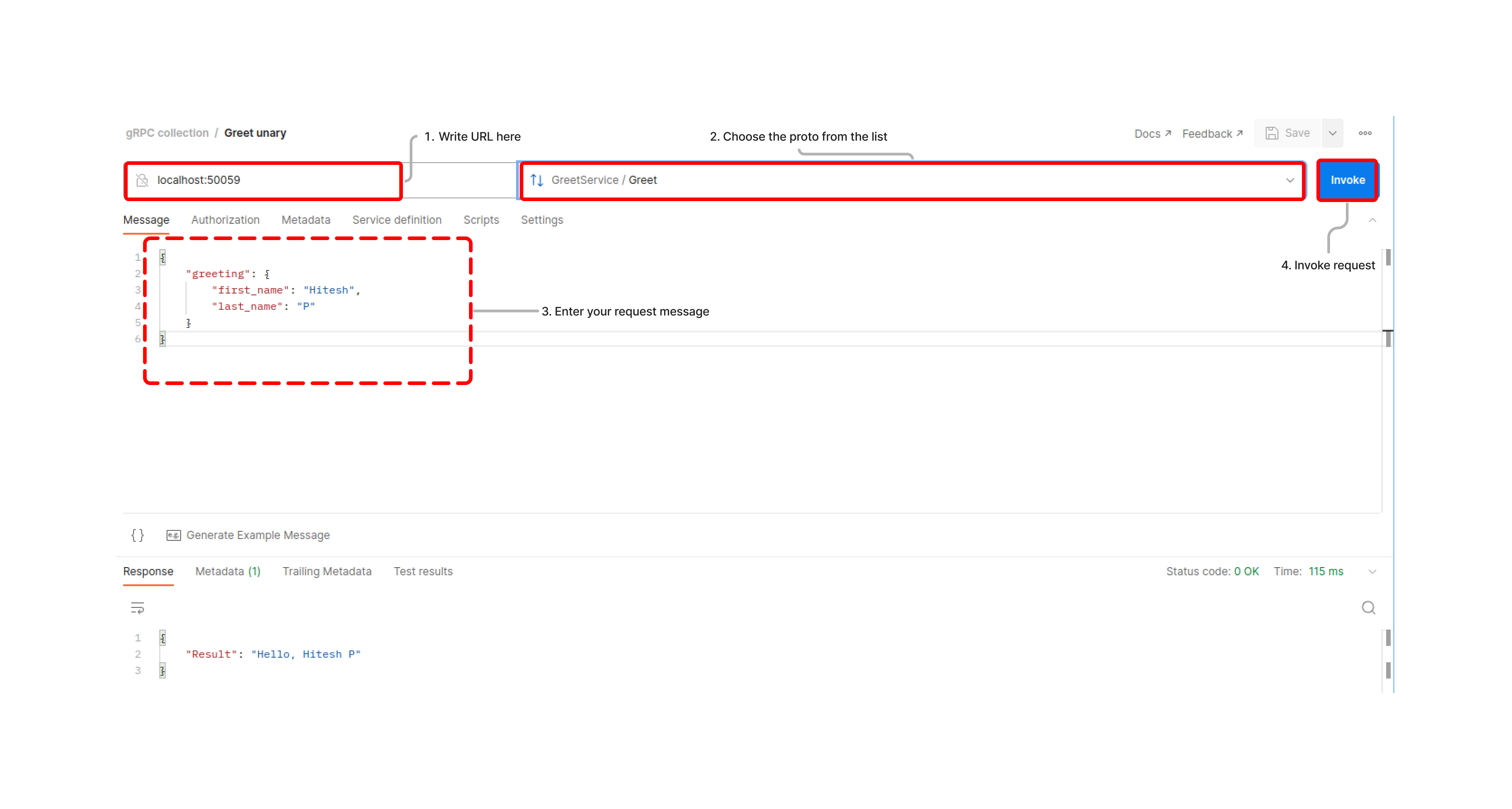

-

Then click on ‘New’ and select ‘gRPC request’, enter the URI and choose the proto from the list of saved ‘APIs’ and finally enter your request message and hit ‘Invoke’

In the above steps we figured out the process to test our gRPC APIs via Postman. The process to test gRPC endpoints is different from that of REST endpoints using Postman. One thing to remember is that while creating and saving the proto contract as in 5, all proto message and service definitions need to be in the same place, as there is no provision to access proto messages across versions in Postman.

Conclusion

In this post, we developed an idea about RPC, drew parallels with REST as well as discussed their differences, then we went on to discuss an implementation of RPC i.e. gRPC developed by Google.

gRPC as a framework can be crucial, especially for microservice-based architecture for internal communication. It can be used for external communication as well but will require a REST gateway. gRPC is a must for streaming and real-time apps.

Just as Go is proving itself as a server-side language, gRPC is proving itself as a de-facto communication framework.

That’s it folks! Feel free to reach out to Hitesh/Pranoy for any feedback and thoughts on this topic.
