Extended Team for Building Kubernetes DR Solution

Customer Overview

The customer is a US-based, venture-backed company and a market leader in Kubernetes backup and disaster recovery solutions.

They help enterprises overcome Day 2 data management challenges so they can run applications on Kubernetes confidently. Their data management platform, purpose-built for Kubernetes, gives enterprise operations teams an easy-to-use, scalable, and secure system for backup/restore, disaster recovery, and application mobility with unparalleled operational simplicity.

Context & Challenge

The client’s application is built around Kubernetes and comes in two offerings: open source and commercial. They wanted someone who could help them with the maintenance and enhancement of both offerings.

This led to a search for people with expertise in cloud native technologies: a team that could help build an application around Kubernetes, work on the product that manages Kubernetes storage, and develop new features.

The requirements went beyond consulting services. The quality of collaboration was one of the factors they weighed when making their decision.

During the initial phase, we worked through a set of challenges from the client that formed the basis of their evaluation. Some of the challenges were:

- Adding and updating blueprints for databases.

- Ensuring best practices around backup.

- Collaborating with other team members.

- Setting up a community example of the product.

Based on the work we had delivered, the engagement grew from handling individual customer requirements into working as part of the product team, and our area of work expanded.

We started working on things like developing the testing framework, improving licensing features, enhancing logging and debugging tooling, and establishing the product’s presence in marketplaces such as Google and Rancher.

Solutions Deployed

InfraCloud’s scope is not restricted to providing services; it extends to building better products together. The sections below describe in detail how we shaped the development and established a community presence.

Extending Support For More Databases

Organizations use many different databases, each catering to its own needs, which keeps pushing the product to support more and more of them. Extending support does not just mean getting a backup to work; it also means anticipating future changes. We researched best practices thoroughly to understand how each community prefers to take backups and what the suggested backup format should be. We also checked whether existing blueprints needed any changes.

Testing Framework

In the field of backup and recovery, it is crucial to provide reliable solutions and to keep building without hiccups. This creates the need for a testing framework.

At that time, the open source offering lacked an end-to-end testing framework. Unit tests were present, but the complete lifecycle (Deploy product -> Backup data -> Restore data -> Verify data) was not automated.

At the same time, the product team wanted to iterate faster on new features. Features were being tried out on a second product infrastructure used for testing, which was a tedious process and required manual intervention.

We ended up creating a standalone framework. This is easier said than done, because backup and recovery (application-level data management) differs from one application to another. The framework needs to be generic so that it can easily adapt to different blueprints. It should also perform every step the way the documentation defines it, so that it verifies the documentation against the application’s actual behavior.
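To illustrate the idea of a generic lifecycle test, here is a minimal Python sketch. It is not the customer’s actual framework: the `AppBlueprint` interface, its method names, and the `InMemoryApp` stand-in are all hypothetical, and a real adapter would talk to a cluster rather than a dictionary.

```python
from dataclasses import dataclass, field
from typing import Protocol


class AppBlueprint(Protocol):
    """Hypothetical interface that each application adapter would implement."""

    def deploy(self) -> None: ...
    def write_data(self) -> dict: ...
    def backup(self) -> str: ...
    def destroy_data(self) -> None: ...
    def restore(self, backup_id: str) -> None: ...
    def read_data(self) -> dict: ...


@dataclass
class InMemoryApp:
    """Stand-in for a real database so the sketch runs without a cluster."""

    data: dict = field(default_factory=dict)
    backups: dict = field(default_factory=dict)

    def deploy(self) -> None:
        self.data = {}

    def write_data(self) -> dict:
        self.data = {"row-1": "hello", "row-2": "world"}
        return dict(self.data)

    def backup(self) -> str:
        backup_id = f"backup-{len(self.backups) + 1}"
        self.backups[backup_id] = dict(self.data)
        return backup_id

    def destroy_data(self) -> None:
        self.data = {}

    def restore(self, backup_id: str) -> None:
        self.data = dict(self.backups[backup_id])

    def read_data(self) -> dict:
        return dict(self.data)


def run_lifecycle(app: AppBlueprint) -> bool:
    """Deploy -> write -> backup -> simulate data loss -> restore -> verify."""
    app.deploy()
    expected = app.write_data()
    backup_id = app.backup()
    app.destroy_data()
    app.restore(backup_id)
    return app.read_data() == expected


if __name__ == "__main__":
    print(run_lifecycle(InMemoryApp()))
```

Because `run_lifecycle` only depends on the interface, supporting another application in this sketch would mean writing one more adapter rather than another test flow.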

Once the framework was in place, it built confidence within the team and in the product’s reliability.

Product Licensing Improvement

Licensing is the backbone of customer experience in any commercial product. Take a backup and recovery application as an example. Suppose your license expires because renewal was delayed by a few days, and something terrible happens to your application in that same period. Your mission-critical application needs to return to a good state, but your recovery solution won’t let you restore it because the license is invalid. Even a few minutes in that state could cause a massive loss in business value.

InfraCloud helped the customer put notification and renewal periods in place. To avoid situations like the one above, the renewal mechanism disables some product features, but not all of them, during a 40-day notification/renewal period.
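The grace-period behavior described above can be sketched roughly as follows. Only the 40-day window comes from the engagement; the feature names, the choice to keep only restore enabled during the grace period, and the decision to disable everything afterwards are assumptions made for illustration.

```python
from datetime import date, timedelta

GRACE_PERIOD = timedelta(days=40)  # notification/renewal window from the text

# Assumption: these feature names and the grace-period subset are illustrative.
ALL_FEATURES = {"restore", "backup", "policy_scheduling"}
GRACE_FEATURES = {"restore"}


def license_state(expiry: date, today: date) -> str:
    """Classify a license as valid, in its grace period, or expired."""
    if today <= expiry:
        return "valid"
    if today <= expiry + GRACE_PERIOD:
        return "grace"
    return "expired"


def allowed_features(expiry: date, today: date) -> set:
    """Return the features that remain usable for the given date."""
    state = license_state(expiry, today)
    if state == "valid":
        return set(ALL_FEATURES)
    if state == "grace":
        return set(GRACE_FEATURES)
    return set()  # assumption: nothing stays enabled after the grace period
```

In this sketch, a customer whose license lapsed last week can still restore a mission-critical application while the renewal is being processed.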

Product Features Development

Beyond supporting and enabling end users, we developed new features to improve overall product capabilities:

- Auto-Sidecar Injection: Automated K8s deployment instrumentation for generic backup.

- Replacement of Internal Data Transfer tool: For better performance and security, we switched to Kopia in the open source offering.

- Managed Services and Operator Integrations: Integrated various operators and managed services such as PGO, K8ssandra, RDS, and KubeVirt, enabling successful PoCs and winning customers.

- Product Licensing Improvement: Helped the customer establish notification and renewal systems with graceful feature deactivation.

These are just a few examples. These developments have significantly impacted the product and improved the user experience.

Debugging Tooling Enhancement

When the user is a developer, good debugging tooling is essential. If you can’t see what is happening, it’s all chaos. Users were having trouble sharing debugging information with the support team. The way logs and debug information were stored made it hard to find what you needed, especially when logs from multiple services went into a single file. We separated the output so that each service has its own log files and dumps, which makes things easier for both the support team and users.
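As a rough illustration of separating a combined log by service, consider the Python sketch below. The `[service] message` line format and the service names are assumptions for the example; the product’s real log format is not shown here.

```python
from collections import defaultdict


def split_by_service(lines):
    """Group log lines by the service named in a leading "[service]" tag.

    Lines without a tag are collected under "unknown" so nothing is lost.
    """
    per_service = defaultdict(list)
    for line in lines:
        if line.startswith("[") and "]" in line:
            service, _, message = line[1:].partition("]")
            per_service[service].append(message.strip())
        else:
            per_service["unknown"].append(line.strip())
    return dict(per_service)


if __name__ == "__main__":
    combined = [
        "[catalog] started",
        "[executor] backup failed",
        "[catalog] ready",
    ]
    for service, messages in split_by_service(combined).items():
        print(service, messages)
```

Each group could then be written to its own file, so a support engineer opens only the log for the service under investigation.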

CI/CD Migration

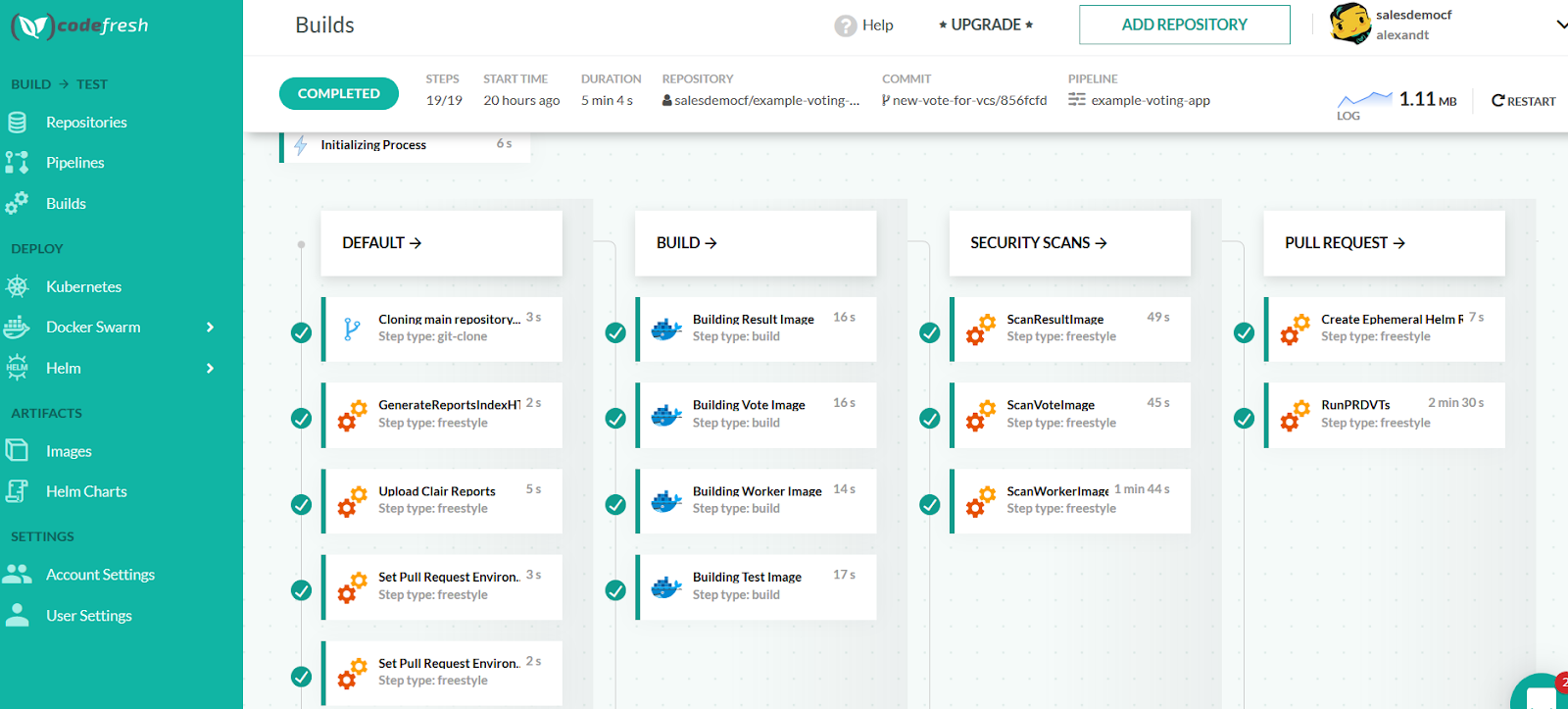

The customer was running its CI/CD pipelines on Shippable. Because Shippable was being acquired by another company, the team decided to move to Codefresh. It was not a straightforward process: there were many pipelines for each kind of test, plus environment-specific tests for GKE, EKS, and others. We worked with the team to come up with a migration plan, and decided to get all the pipelines running on Codefresh and then disable them on Shippable.

There were notable differences between the two pipeline implementations. Shippable spins up a VM, while Codefresh is built on top of Argo, so the differences come down to running tests inside a Docker environment. This required some refactoring; for example, the customer relied heavily on bash scripts, and we needed to move that logic to YAML. Codefresh is the more Kubernetes-native implementation.

We had to overcome issues such as running pipelines in parallel, handling the relationships between them, and working out the right resource requirements.

Roughly, the flow in each pipeline looked like this:

Shippable

VM -> Build Container -> Test Cases -> Initial Test Pass -> Artifacts -> Few Test cases.

Codefresh

Already containerized environment -> Run tests -> Artifacts -> Few Test cases.

As you can see, we had to take care of the container environment and find a way to run the tests against the build inside the container. We also had to handle dependent pipelines, that is, the relationships between them: how one pipeline triggers or depends on another.
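One way to reason about pipeline relationships is to model them as a dependency graph and derive which pipelines can run in parallel. The Python sketch below uses the standard library’s `graphlib` module; the pipeline names and their dependencies are hypothetical and do not reflect the customer’s actual setup or Codefresh’s own trigger mechanism.

```python
from graphlib import TopologicalSorter

# Hypothetical pipelines, each mapped to the pipelines it depends on.
PIPELINE_DEPENDENCIES = {
    "build-image": set(),
    "unit-tests": {"build-image"},
    "e2e-gke": {"unit-tests"},
    "e2e-eks": {"unit-tests"},
    "publish-artifacts": {"e2e-gke", "e2e-eks"},
}


def parallel_stages(dependencies):
    """Return stages of pipelines; pipelines in one stage can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        sorter.done(*ready)
    return stages


if __name__ == "__main__":
    for number, stage in enumerate(parallel_stages(PIPELINE_DEPENDENCIES), start=1):
        print(number, stage)
```

In this example the two environment-specific test pipelines land in the same stage, so they can run side by side once the unit tests have passed.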

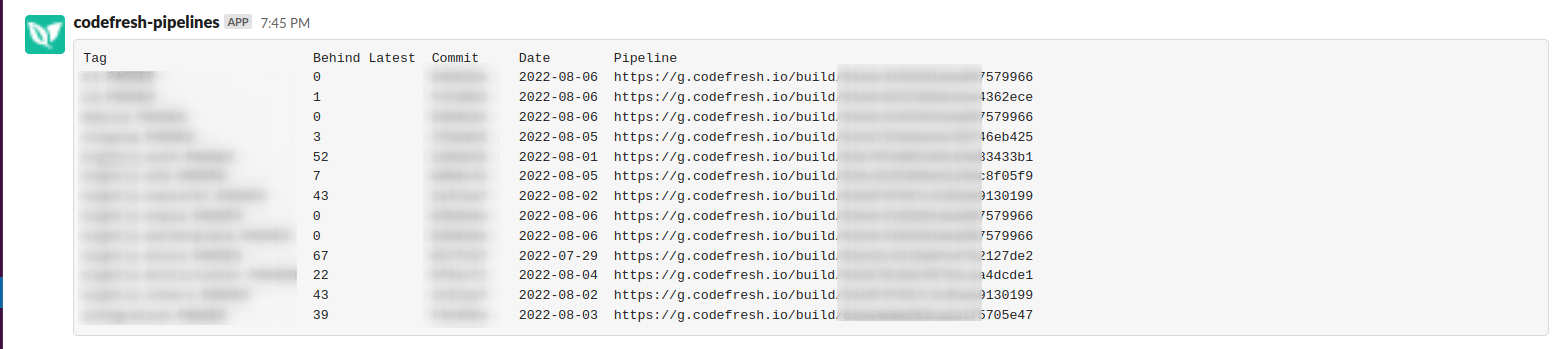

Some features we required were missing in Codefresh, and we helped implement them in the Codefresh pipeline. We also built tooling to ease the customer’s experience, for example helping them develop a Slack bot that reports pipeline status right in the Slack channel.
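As a small illustration of the status notification idea, the Python sketch below builds a message payload of the kind Slack incoming webhooks accept (a JSON object with a `text` field). It swaps in a plain webhook payload rather than showing the actual bot, and the function name, status values, and URL are hypothetical; sending the request is left out.

```python
import json


def build_status_message(pipeline, status, build_url):
    """Build a Slack-style payload describing a pipeline run."""
    icons = {"success": ":white_check_mark:", "failure": ":x:"}
    icon = icons.get(status, ":hourglass:")  # fallback for in-progress states
    text = f"{icon} Pipeline *{pipeline}* finished with status `{status}`: {build_url}"
    return {"text": text}


if __name__ == "__main__":
    payload = build_status_message("e2e-gke", "failure", "https://example.com/builds/42")
    # The JSON body would be POSTed to a webhook URL configured for the channel.
    print(json.dumps(payload))
```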

Open Source Work

Because one of the offerings is open source, the team has always contributed to other projects, whether through proposals for new features, prototype implementations, or work with different groups in Kubernetes. One of these groups is the Data Protection Working Group in the Kubernetes community, where the team helped draft proposals for Kubernetes enhancements and implemented prototypes to enable more useful data protection support.

Establish Product Presence

The product can be listed in multiple marketplaces to ensure its visibility when people are looking for a similar solution. It’s an excellent way to let users select and install the product from the marketplace of their choice. The team went through the whole procedure to list the product in marketplaces like Google Marketplace and Rancher Marketplace.

Benefits

- We helped establish the testing framework, which increased the team’s productivity across the overall process.

- We extended functionality and support to more databases, which increased the scope of the product and the reliability of existing blueprints.

- We helped the customer submit new features and suggestions to the open source community.

- We established an open source as well as a marketplace presence across Google Marketplace and Rancher Marketplace.

- We helped improve the user experience through the renewal mechanism and debugging tooling improvements.

Why InfraCloud

- We are more than just service providers; we help our customers build better products from day 1 of the engagement, shaping them from both an engineering and a business value perspective.

- We are a premier technology company with DevOps engineers who have pioneered work with containers, container orchestration, cloud platforms, DevOps, infrastructure automation, SDN, and big data infrastructure solutions.

- Our deep expertise and focus in these areas enable our customers to build better software faster.

- We have 50 Kubernetes certified specialists that you can trust: CKA, CKAD & CKS.

- We are also one of the cloud native technology thought leaders, with speakers and authors contributing to global CNCF conferences.

Got a Question or Need Expert Advice?

Schedule a 30-minute chat with our experts to discuss more.