Build Container Images with Kaniko

Introduction

It is common practice to build customized Docker images and push them to image repositories for future (re)use. The most common way to build images is with a Dockerfile and docker build, which needs access to the Docker daemon. Access to the Docker daemon presents a few challenges and limitations, especially around security (open sockets) and ease of use (Docker in Docker, anyone?). This matters even more when you want to build inside a Kubernetes cluster or inside the executor agents created by a CI/CD pipeline.

To overcome this challenge, a bunch of tools have been written that can read the instructions in a Dockerfile and build container images, just without needing the Docker daemon. The notable ones that I am aware of are Buildah from Project Atomic, Kaniko from Google, and img by Jess Frazelle. In this tutorial, we will look at Kaniko and build a sample image that will be pushed to an AWS ECR repository.

Kaniko

Kaniko is a daemonless container image builder. It runs as a container image inside a Kubernetes cluster to build container images, and it also supports running in Google Container Builder or within gVisor. To run it locally, you just need the Docker engine and gcloud installed. For details about the inner workings of Kaniko, check out the blog post.

Kaniko itself runs as a container and needs the following information to build an image:

- The path to Dockerfile.

- The path to the build context (workspace) directory. The build context directory contains all the artefacts required while building the image.

- Destination/URL of the repository where the image is to be pushed.
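These three inputs correspond to the kaniko executor's --dockerfile, --context and --destination flags. The helper below is a hypothetical sketch (kaniko_executor_args is a name made up for illustration, not part of kaniko) that simply prints the flags in the form the executor accepts.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of kaniko): print the executor flags for the
# three inputs kaniko needs to build and push an image.
kaniko_executor_args() {
  if [ "$#" -ne 3 ]; then
    echo "usage: kaniko_executor_args <dockerfile> <context> <destination>" >&2
    return 1
  fi
  local dockerfile="$1" context="$2" destination="$3"
  # --dockerfile:  path to the Dockerfile (as seen inside the kaniko container)
  # --context:     build context (workspace) directory
  # --destination: registry/repository:tag to push the built image to
  printf '%s\n' \
    "--dockerfile=${dockerfile}" \
    "--context=${context}" \
    "--destination=${destination}"
}
```

For example, kaniko_executor_args /workspace/Dockerfile /workspace/ aws_account_id.dkr.ecr.region.amazonaws.com/my-test-repo:kaniko prints one flag per line.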

We will install Kaniko, build an image from a local Dockerfile, and push it to AWS ECR.

Prerequisites

- Push/pull access to an ECR repository.

- The AWS CLI installed and configured on your local Linux box. To install it, refer to the AWS CLI user guide.

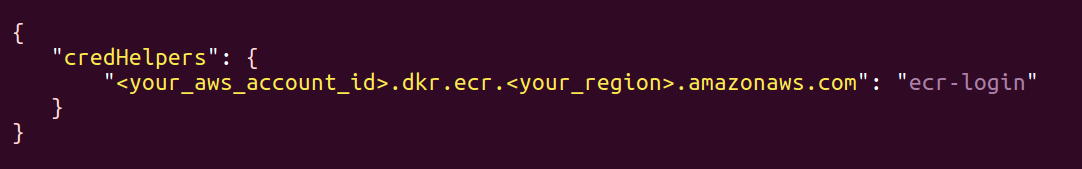

- The $HOME/.aws/credentials file should be correctly set with “aws_access_key_id” and “aws_secret_access_key”.

- Update the $HOME/.docker/config.json file so that it references your ECR repository.
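One way to satisfy both of these prerequisites, sketched under the assumption that you use the Amazon ECR credential helper, as described in the kaniko README: config.json lists your registry under credHelpers with the value ecr-login, and the helper reads the AWS credentials file. write_ecr_docker_config is a hypothetical helper name. It overwrites the target file, so back up any existing config.json first.

```shell
#!/usr/bin/env bash
# Sketch: write a Docker config.json that points one ECR registry at the
# "ecr-login" credential helper. Hypothetical helper; it OVERWRITES the file.
#
# $HOME/.aws/credentials is expected to look like (placeholder values):
#   [default]
#   aws_access_key_id = <your access key id>
#   aws_secret_access_key = <your secret access key>
write_ecr_docker_config() {
  local registry="$1"                                  # e.g. aws_account_id.dkr.ecr.region.amazonaws.com
  local config_file="${2:-$HOME/.docker/config.json}"  # defaults to the file mounted into kaniko
  if [ -z "$registry" ]; then
    echo "usage: write_ecr_docker_config <registry> [config_file]" >&2
    return 1
  fi
  mkdir -p "$(dirname "$config_file")"
  cat > "$config_file" <<EOF
{
  "credHelpers": {
    "${registry}": "ecr-login"
  }
}
EOF
}
```

For example: write_ecr_docker_config aws_account_id.dkr.ecr.region.amazonaws.com, with your own account ID and region substituted.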

Install and run locally

- Download source from Github:

git clone https://github.com/GoogleContainerTools/kaniko.git - To pull the kaniko container image named “executor” and load it into the locally running docker daemon, execute:

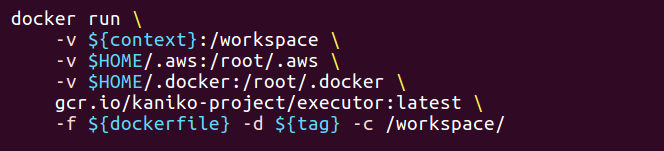

make images - While running locally, it requires us to run the “kaniko container”. To push images to ECR you need to mount the aws credentials file ($HOME/.aws/credentials) and docker config.json ($HOME/.docker/config.json) into the kaniko container inside which the image would be built. So, we need to edit the “run_in_docker.sh”. The docker command inside the “run_in_docker.sh” should look like this:

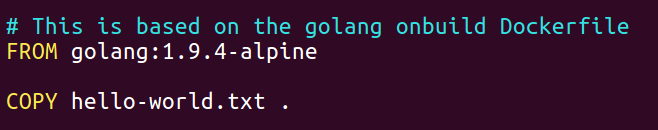
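A run_in_docker.sh-style wrapper with these mounts might look roughly like the sketch below. This is an approximation, not the exact script. The function name run_kaniko_in_docker, the read-only mounts, and the mount targets /root/.aws and /kaniko/.docker/config.json are assumptions based on the kaniko README's instructions for ECR. The second argument is the host directory that gets mounted at /workspace. Setting DRY_RUN=1 prints the command instead of running it.

```shell
#!/usr/bin/env bash
# Sketch of a run_in_docker.sh-style wrapper for pushing to ECR (approximation,
# hypothetical function name). DRY_RUN=1 prints the docker command, one word
# per line, instead of executing it.
run_kaniko_in_docker() {
  if [ "$#" -ne 3 ]; then
    echo "usage: run_kaniko_in_docker <path to Dockerfile> <context directory> <image tag>" >&2
    return 1
  fi
  local dockerfile="$1" context="$2" tag="$3"
  # Mounts: AWS credentials (read by the ECR credential helper), the Docker
  # config.json with the credHelpers entry, and the host build context.
  local -a cmd
  cmd=(docker run --rm
    -v "$HOME/.aws:/root/.aws:ro"
    -v "$HOME/.docker/config.json:/kaniko/.docker/config.json:ro"
    -v "${context}:/workspace"
    gcr.io/kaniko-project/executor:latest
    --dockerfile="${dockerfile}"
    --context=/workspace/
    --destination="${tag}")
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "${cmd[@]}"
  else
    "${cmd[@]}"
  fi
}
```

For example, DRY_RUN=1 run_kaniko_in_docker /workspace/Dockerfile "$HOME/workspace" aws_account_id.dkr.ecr.region.amazonaws.com/my-test-repo:kaniko shows the full command before you run it for real.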

Kaniko requires a build context (workspace) directory containing the artifacts needed at build time. We will create a workspace directory, $HOME/workspace, and use a Dockerfile that copies a simple file, hello-world.txt, into the image. Place this Dockerfile, along with hello-world.txt, in your workspace.
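A minimal sketch of this setup, assuming the default $HOME/workspace location (override it with WORKSPACE) and alpine:3.19 as an arbitrary base image. The post's own Dockerfile may use a different base image or destination path.

```shell
#!/usr/bin/env bash
# Sketch: create the build context (workspace) used in this walkthrough.
# Assumptions: WORKSPACE defaults to $HOME/workspace; alpine:3.19 is an
# arbitrary base image chosen for illustration.
WORKSPACE="${WORKSPACE:-$HOME/workspace}"
mkdir -p "$WORKSPACE"

# The file that the Dockerfile copies into the image
echo 'Hello, World!' > "$WORKSPACE/hello-world.txt"

# A minimal Dockerfile that copies hello-world.txt into the image
cat > "$WORKSPACE/Dockerfile" <<'EOF'
FROM alpine:3.19
COPY hello-world.txt /hello-world.txt
EOF
```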

Now that the basic setup is in place, let's build an image and push it to an ECR repo.

Build and push image

Run the following command, replacing aws_account_id and region with your AWS account ID and region:

run_in_docker.sh /workspace/Dockerfile /workspace/ aws_account_id.dkr.ecr.region.amazonaws.com/my-test-repo:kaniko

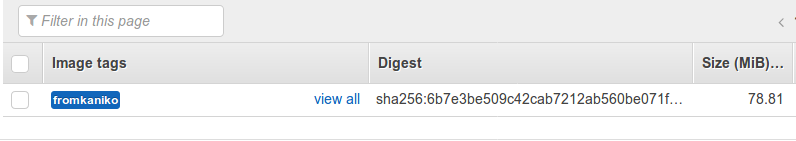

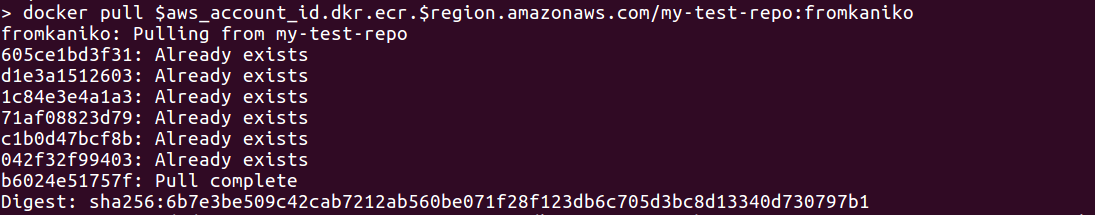
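The destination follows the pattern aws_account_id.dkr.ecr.region.amazonaws.com/repository:tag. The hypothetical helper ecr_image_uri below composes it from its parts. The commented AWS CLI calls show one way to look up the account ID and default region, assuming the CLI is configured as described in the prerequisites.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build an ECR image reference of the form
#   <account_id>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>
ecr_image_uri() {
  if [ "$#" -ne 4 ]; then
    echo "usage: ecr_image_uri <account_id> <region> <repository> <tag>" >&2
    return 1
  fi
  printf '%s.dkr.ecr.%s.amazonaws.com/%s:%s\n' "$1" "$2" "$3" "$4"
}

# Example (requires a configured AWS CLI; not executed here):
#   account_id="$(aws sts get-caller-identity --query Account --output text)"
#   region="$(aws configure get region)"
#   run_in_docker.sh /workspace/Dockerfile /workspace/ \
#     "$(ecr_image_uri "$account_id" "$region" my-test-repo kaniko)"
```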

Verifying image upload

To verify that the image upload was successful, check the AWS ECR dashboard or pull the image from the ECR repo (make sure you have run docker login against the ECR registry first).
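Both checks can also be done from the command line. The sketch below assumes Docker and a recent AWS CLI that provides aws ecr get-login-password. verify_ecr_image and DRY_RUN are illustrative names, and DRY_RUN=1 only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: confirm an image tag exists in ECR, log Docker in, then pull it.
# Hypothetical helper; DRY_RUN=1 prints the commands instead of running them.
verify_ecr_image() {
  if [ "$#" -ne 4 ]; then
    echo "usage: verify_ecr_image <registry> <region> <repository> <tag>" >&2
    return 1
  fi
  local registry="$1" region="$2" repository="$3" tag="$4"
  local dry_run="${DRY_RUN:-0}"
  local -a run=()
  if [ "$dry_run" = "1" ]; then
    run=(echo)   # prefix every command with echo in dry-run mode
  fi

  # 1. Ask ECR whether an image with this tag exists in the repository
  "${run[@]}" aws ecr describe-images --region "$region" \
    --repository-name "$repository" --image-ids imageTag="$tag" || return 1

  # 2. Log Docker in to the ECR registry
  if [ "$dry_run" = "1" ]; then
    echo "aws ecr get-login-password --region $region | docker login --username AWS --password-stdin $registry"
  else
    aws ecr get-login-password --region "$region" \
      | docker login --username AWS --password-stdin "$registry" || return 1
  fi

  # 3. Pull the image that kaniko pushed
  "${run[@]}" docker pull "${registry}/${repository}:${tag}"
}
```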

More interesting stuff:

- There are other tools that build container images; here is a quick comparison of them: https://github.com/GoogleContainerTools/kaniko#comparison-with-other-tools

- You can use Skaffold along with Kaniko to build and deploy images in a cluster. This optimizes the developer workflow quite a bit and is a nice way to run things on a cluster: https://medium.com/google-cloud/skaffold-and-kaniko-bringing-kubernetes-to-developers-a43914777af9
